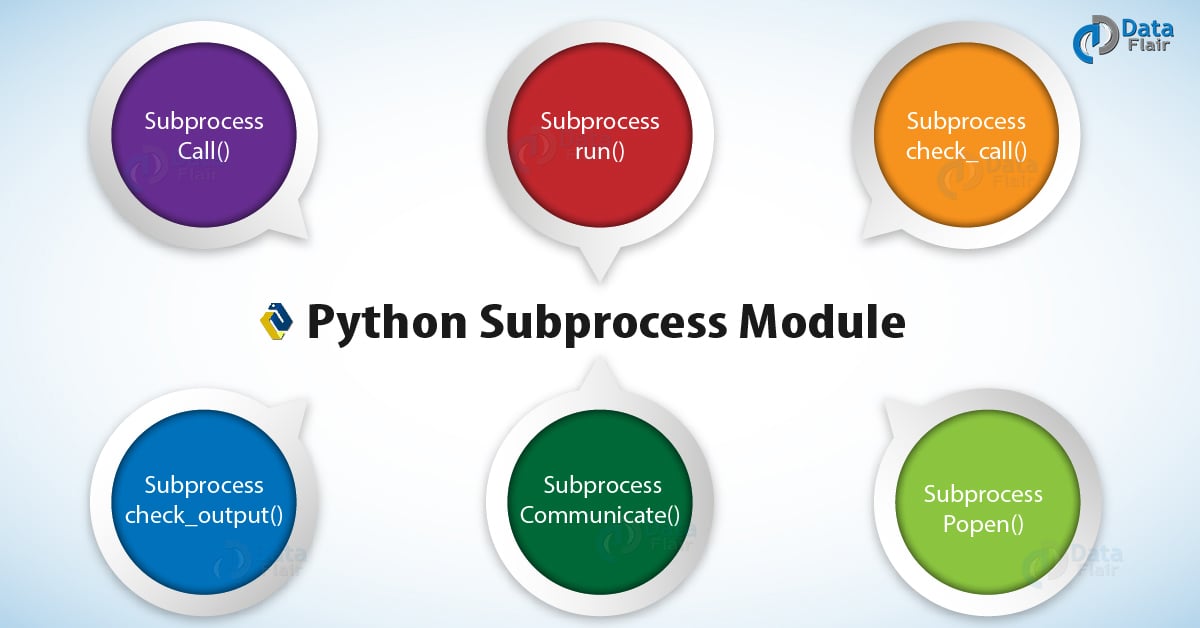

Inside the run_cmd helper, the command is launched with `proc = subprocess.Popen(args_list, stdout=subprocess.PIPE, stderr=subprocess.PIPE)`, so both stdout and stderr are captured through pipes.

3- Examples of HDFS commands from Python

Run Hadoop ls command in Python

Run Hadoop copyFromLocal command in Python

Remove a file permanently, skipping the HDFS trash:

`hdfs dfs -rm -skipTrash /path/to/file/you/want/to/remove/permanently`

Remove the entire directory and all of its content from HDFS.

Test a path with `hdfs dfs -test`. With -z, the command returns 0 if the file is zero length.

These simple but very powerful lines of code let you interact with HDFS programmatically. They can easily be scheduled as part of cron jobs.

If you run a command with os.popen instead, calling .read() on the returned object gives you the whole output as one string. The .readlines() function splits the output into lines, and each line keeps its trailing \n. You can also write to the stream by passing the mode='w' argument. To delve deeper into this function, have a look at its documentation.

In this example and the following ones, the output always ends with a trailing line break. Use the .strip() function, as in output.strip(), to remove it along with any blank spaces and tabs at the beginning and end. To remove those characters only at the beginning, use .lstrip(). To remove them only at the end, use .rstrip().

The final approach, the subprocess module, is the most versatile one and the recommended way to run external commands in Python. It provides more powerful facilities for spawning new processes and retrieving their results, so it is preferable to these older functions. See the "Replacing Older Functions with the subprocess Module" section in the subprocess documentation for some helpful recipes.
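The following is a minimal sketch of the os.popen behaviour described above, assuming a POSIX shell. The commands `echo hello` and `echo a; echo b` are placeholders chosen for illustration. The sketch reads the output with .read() and .readlines(), trims the trailing newline with .strip(), and then runs the same command the recommended way with subprocess.run. The mode='w' variant is not shown.

```python
import os
import subprocess

# os.popen runs the command through the shell and returns a file-like object.
# The commands below are placeholders and assume a POSIX shell.
with os.popen("echo hello") as stream:
    whole = stream.read()  # the whole output as one string, trailing '\n' included

with os.popen("echo a; echo b") as stream:
    lines = stream.readlines()  # one string per line, each keeping its '\n'

print(repr(whole))          # 'hello\n'
print(repr(whole.strip()))  # 'hello'
print(lines)                # ['a\n', 'b\n']

# .lstrip() and .rstrip() trim only one side of the string.
padded = "  \thello \n"
print(repr(padded.lstrip()))  # 'hello \n'
print(repr(padded.rstrip()))  # '  \thello'

# Recommended replacement: subprocess.run with an argument list (no shell).
# capture_output and text require Python 3.7 or newer.
result = subprocess.run(["echo", "hello"], capture_output=True, text=True)
print(result.returncode, repr(result.stdout.strip()))  # 0 'hello'
```

Passing a list to subprocess.run avoids the shell entirely. This is the same reason the run_cmd helper in this post takes its command as a list of arguments.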

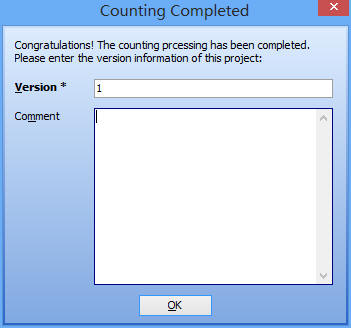

Interacting with Hadoop HDFS using Python codes

The Python "subprocess" module allows us to spawn new processes, connect to their input/output/error pipes, and obtain their return codes. To run UNIX commands, we need to create a subprocess that runs the command. The recommended approach is to use the convenience functions for all use cases they can handle. For anything they cannot handle, the underlying Popen interface can be used directly.

We will create a Python function called run_cmd that lets us run any Unix or Linux command, or in our case hdfs dfs commands. It runs the command through pipes and captures stdout and stderr. The command is passed as a Python list of arguments, one element per part of the native Unix or HDFS command, rather than as a single string. This way you don't have to parse or escape characters. The function first echoes the command it is about to run:

`print('Running system command: {0}'.format(' '.join(args_list)))`
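Putting these pieces together, run_cmd could look like the sketch below. Several details are assumptions of this sketch, not the original code:

- the communicate() call;
- the (return code, stdout, stderr) return value;
- text=True, so outputs come back as strings.

The hdfs_examples function and every /user/example path are hypothetical. hdfs_examples is never called automatically, because it deletes paths. The script itself only exercises run_cmd on a local Python command, so it runs without a Hadoop client.

```python
import subprocess
import sys


def run_cmd(args_list):
    """Run a command given as a list of arguments and capture its output.

    Returns a (return_code, stdout, stderr) tuple of (int, str, str).
    """
    print('Running system command: {0}'.format(' '.join(args_list)))
    # text=True (an assumption of this sketch) decodes both pipes to str.
    proc = subprocess.Popen(args_list, stdout=subprocess.PIPE,
                            stderr=subprocess.PIPE, text=True)
    # communicate() waits for the process and drains both pipes together,
    # which avoids blocking when one pipe fills up while reading the other.
    s_output, s_err = proc.communicate()
    return proc.returncode, s_output, s_err


def hdfs_examples():
    """Hypothetical usage against HDFS; requires the hdfs CLI. Not called below."""
    # List a directory; strip() drops the trailing line break before splitting.
    ret, out, err = run_cmd(['hdfs', 'dfs', '-ls', '/user/example'])
    for line in out.strip().splitlines():
        print(line)
    # Copy a local file into HDFS.
    run_cmd(['hdfs', 'dfs', '-copyFromLocal', 'local_file.txt', '/user/example/'])
    # Remove a file permanently, skipping the trash.
    run_cmd(['hdfs', 'dfs', '-rm', '-skipTrash', '/user/example/old_file.txt'])
    # Remove a directory and all of its content.
    run_cmd(['hdfs', 'dfs', '-rm', '-r', '/user/example/old_dir'])
    # -test -z returns 0 if the file is zero length, non-zero otherwise.
    ret, _, _ = run_cmd(['hdfs', 'dfs', '-test', '-z', '/user/example/maybe_empty.txt'])
    print('zero length' if ret == 0 else 'not zero length')


# Local demonstration that works without Hadoop installed.
ret, out, err = run_cmd([sys.executable, '-c', "print('hello from run_cmd')"])
print(ret, out.strip())
```

Because every call goes through a list of arguments, the same helper works for `hdfs dfs` commands and for any other executable on the PATH.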
